Though invisible to most users, APIs are the backbone of modern web applications. Developers love them because they facilitate complex integrations between systems and services. The business loves them because integrating disparate systems to create new products and services drives innovation and growth.

The challenge with this transformative connectivity is the dependencies that exist between systems. API failure can result in performance degradation, data anomalies, or even system-wide outages. That’s why API monitoring is emerging as one of the industry’s primary concerns in 2021 and beyond.

The Challenges of Monitoring APIs

APIs are rapidly evolving as adoption continues to grow across industries, but companies are still facing challenges in adopting strategies to monitor and maintain this critical technology. Common issues include performance, balancing response time and reliability constraints, and data quality.

While APIs are designed to solve complex problems, new complexities can manifest themselves in the management and monitoring of the APIs. Here are some of the most significant pain points:

The Black Box

In software development, a ‘black box’ refers to software whose inner workings are hidden from the systems that use it. One of the primary benefits of API-oriented architectures like microservices is that two systems or services can exchange data without either side understanding the inner workings of the other. However, this can create challenges when issues emerge in testing or production. The difficulty is often in determining whether an issue originates in one of the APIs or in the applications they are servicing.

Multiple, Siloed Data Sources

Another strength of APIs is integrating disparate applications and sources of data to form a new application that adds value in its own right for users or the business. From the API’s perspective, call sequencing, input parameters, and parameter combinations all play a role in how that data will be processed and passed into an application. A complex dance is involved to ensure the data from all of these different sources are processed correctly. It takes an equally sophisticated monitoring framework to observe these interactions.

Overhead

For many applications, response time is a critical factor for the user experience. Yet some monitoring solutions can themselves impact API performance and degrade that experience. Overhead concerns are not limited to software performance. Operations teams are often the most overburdened members of the staff in terms of workload. Performing analytical tasks to understand the information coming from monitoring tools can exacerbate this problem, especially if false positives get out of control.

Lack of Context/Actionable Data

The work of APIs is inherently technical in nature. As a result, it can be challenging to relate data on API availability and performance back to value streams within IT and the business.

API Monitoring Best Practices

While the best practices for API monitoring sometimes vary by industry or software categories, there are a handful of strategies that all organizations should practice.

Continuous Monitoring

The DevSecOps world brought continuous monitoring to cybersecurity with processes and dedicated tools to constantly assess software systems for vulnerabilities. Organizations should treat the health of their APIs as being just as critical as software vulnerabilities and infrastructure availability. Assess APIs around the clock, every day of the year, with multi-step calls that simulate internal and external interfacing.
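As a rough illustration of the multi-step calls described above, the following Python sketch runs a sequence of dependent steps, times each one, and stops at the first failure. The names `run_sequence`, `login`, and `fetch_orders` are hypothetical, and the two steps are stand-ins for real HTTP calls rather than any particular product's API.

```python
import time
from dataclasses import dataclass
from typing import Any, Callable, Dict, List, Tuple

# A step receives the shared context and returns a value to store in it.
Step = Callable[[Dict[str, Any]], Any]


@dataclass
class StepResult:
    name: str
    ok: bool
    elapsed_s: float
    error: str = ""


def run_sequence(steps: List[Tuple[str, Step]]) -> List[StepResult]:
    """Run named steps in order, sharing a context; stop at the first failure."""
    context: Dict[str, Any] = {}
    results: List[StepResult] = []
    for name, step in steps:
        start = time.perf_counter()
        try:
            context[name] = step(context)
            results.append(StepResult(name, True, time.perf_counter() - start))
        except Exception as exc:
            elapsed = time.perf_counter() - start
            results.append(StepResult(name, False, elapsed, str(exc)))
            break  # later steps depend on earlier ones, so stop here
    return results


# Hypothetical steps standing in for real API calls.
def login(ctx: Dict[str, Any]) -> Dict[str, str]:
    return {"token": "abc123"}


def fetch_orders(ctx: Dict[str, Any]) -> List[Dict[str, int]]:
    token = ctx["login"]["token"]  # uses the output of the previous step
    if not token:
        raise RuntimeError("missing token")
    return [{"id": 1}]


for result in run_sequence([("login", login), ("fetch_orders", fetch_orders)]):
    print(result)
```

In practice a scheduler would run a sequence like this at a fixed interval from several locations, but that part is left out of the sketch.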

Push Monitoring Beyond Availability and Performance

API failure and response time degradations can have a massive impact on the user experience and the business. But what about data and functional correctness? Even if APIs are available and responsive, it doesn’t mean they are performing correctly. Data anomalies can have a tremendous impact on the quality of an application and the reputation of a business.
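To make the distinction concrete, here is a minimal Python sketch of a correctness check that looks past the status code and inspects the payload itself. The function name `check_order_response` and the fields `id`, `amount`, and `currency` are assumptions chosen for illustration; a real check would follow the API's own contract.

```python
from typing import Any, Dict, List


def check_order_response(status: int, body: Dict[str, Any]) -> List[str]:
    """Return a list of problems; an empty list means the response looks correct."""
    problems: List[str] = []
    if status != 200:
        problems.append(f"unexpected status {status}")

    # Hypothetical contract: each field must be present with the expected type.
    expected = (("id", str), ("amount", (int, float)), ("currency", str))
    for field, expected_type in expected:
        if field not in body:
            problems.append(f"missing field {field!r}")
        elif not isinstance(body[field], expected_type):
            problems.append(f"field {field!r} has wrong type")

    # A simple data-sanity rule: amounts should never be negative.
    amount = body.get("amount")
    if isinstance(amount, (int, float)) and amount < 0:
        problems.append("amount is negative")
    return problems
```

A response can be fast and return a success status and still fail a check like this one, which is exactly the case that availability and latency monitoring alone would miss.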

Consumability

Data generated by an API monitoring tool must be consumable by human operators and systems configured for an automated response. In addition, data should be aggregated and visualized, preferably with actionable insights to reduce resolution times and minimize operator burden when problems occur. If monitoring tools are too difficult to use, operators won’t have time to take advantage of all of the benefits.
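One way to serve both audiences is to emit each alert in two forms: structured data for automated systems and a short summary for people. The Python sketch below assumes a hypothetical `Alert` record; the field names are illustrative and not taken from any specific monitoring tool.

```python
import json
from dataclasses import asdict, dataclass


@dataclass
class Alert:
    endpoint: str
    metric: str
    observed: float
    expected: float
    severity: str

    def to_json(self) -> str:
        """Machine-readable form for automated responders."""
        return json.dumps(asdict(self), sort_keys=True)

    def summary(self) -> str:
        """One-line, human-readable form for operators."""
        return (
            f"[{self.severity.upper()}] {self.endpoint}: {self.metric} is "
            f"{self.observed:g} (expected about {self.expected:g})"
        )


alert = Alert("/orders", "p95_latency_ms", 840.0, 210.0, "high")
print(alert.summary())
print(alert.to_json())
```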

Contextual Awareness for Business

For monitoring to deliver value to end-users and the business, it requires context. There needs to be an established baseline of normal behavior and usage patterns and an understanding of how things like seasonality or holidays impact that behavior. This type of information empowers developers and system architects to optimize APIs for the peaks and valleys that businesses deal with every year.
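A very simple way to capture weekly seasonality is to keep a separate baseline for each weekday and hour, then compare new values against the baseline for their own slot. The Python sketch below uses a mean and standard deviation per slot with a threshold of `k` standard deviations. This is an illustrative assumption, far simpler than the machine learning approaches commercial tools use, and it does not account for holidays.

```python
from collections import defaultdict
from datetime import datetime
from statistics import mean, pstdev
from typing import Dict, Iterable, List, Tuple

Sample = Tuple[datetime, float]
Slot = Tuple[int, int]  # (weekday, hour)


def build_baseline(history: Iterable[Sample]) -> Dict[Slot, Tuple[float, float]]:
    """Group samples by (weekday, hour) and store each slot's mean and std dev."""
    buckets: Dict[Slot, List[float]] = defaultdict(list)
    for ts, value in history:
        buckets[(ts.weekday(), ts.hour)].append(value)
    return {slot: (mean(vals), pstdev(vals)) for slot, vals in buckets.items()}


def is_anomalous(
    baseline: Dict[Slot, Tuple[float, float]],
    ts: datetime,
    value: float,
    k: float = 3.0,
) -> bool:
    """Flag a value more than k standard deviations from its slot's mean."""
    slot = (ts.weekday(), ts.hour)
    if slot not in baseline:
        return False  # no history for this slot, so make no judgment
    mu, sigma = baseline[slot]
    # Guard against a zero std dev, where any deviation would otherwise divide out.
    return abs(value - mu) > k * max(sigma, 1e-9)
```

With a baseline like this, a request rate that is normal for Monday at 9:00 is not compared against the quiet hours of a Sunday night, which reduces false positives from ordinary weekly patterns.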

API Monitoring with Anodot

The exponential growth of web services in the last decade was driven mainly by the proliferation of APIs. Today, they form the backbone of digital transformation and modern application development. For this reason, API monitoring is just as critical as keeping tabs on servers and infrastructure.

Autonomous API Monitoring is a game-changer for businesses that need to go further than monitoring API availability and push towards continuously improving their users’ experience. The system is simple to set up, with built-in connectors that allow application code to send events directly to a web application. It can learn the expected behavior of all critical application metrics within minutes and immediately start monitoring every endpoint for anomalous behavior in metrics including latency, response time, error rates, and activity limits.

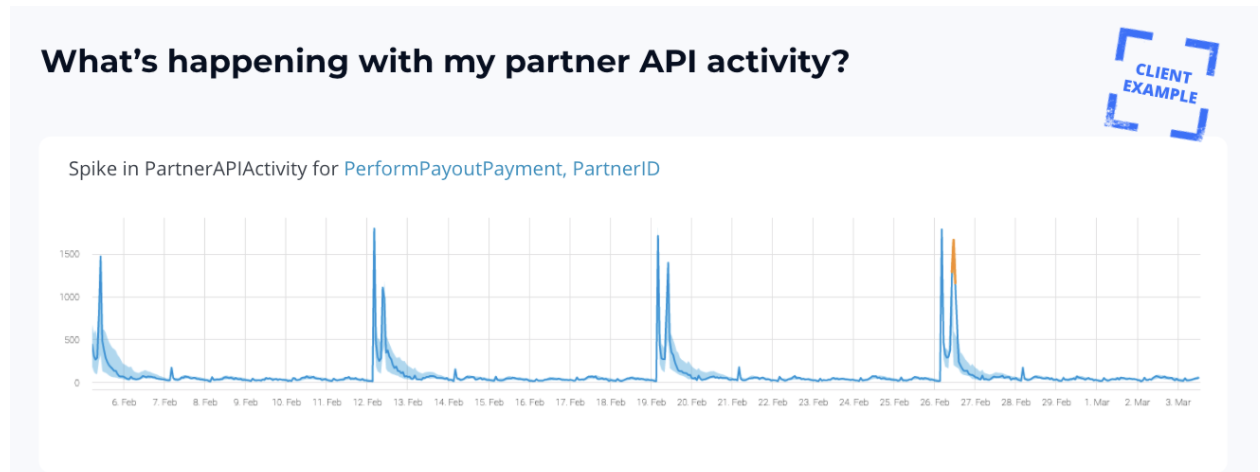

A fintech client using Anodot’s Autonomous Business Monitoring platform observed a spike in activity with an external API partner that the system leveraged for payouts, indicating a potential for fraud, churn, or a compromised account. Because Anodot automatically monitors all API data in real time, the incident was picked up instantly and the customer was alerted. As a result, the customer could intervene and forward it to their fraud team for investigation before it was too late to prevent further damage.

It isn’t sufficient for API monitoring solutions to identify and alert on performance degradations and data anomalies after the fact. By that point, the damage to critical systems and the business is already happening, with breaches of Service Level Agreements being one of the principal concerns. Machine learning empowers API monitoring solutions to identify and understand normal behavior across the application stack so operators can address issues with functionality, performance, correctness, and speed before they impact critical systems.